New research from the Institute for Strategic Dialogue (ISD), a human rights nonprofit that seeks to combat hate speech and misinformation, has found that YouTube fails to enforce community guidelines on a side of the platform that casual users may not even know about.

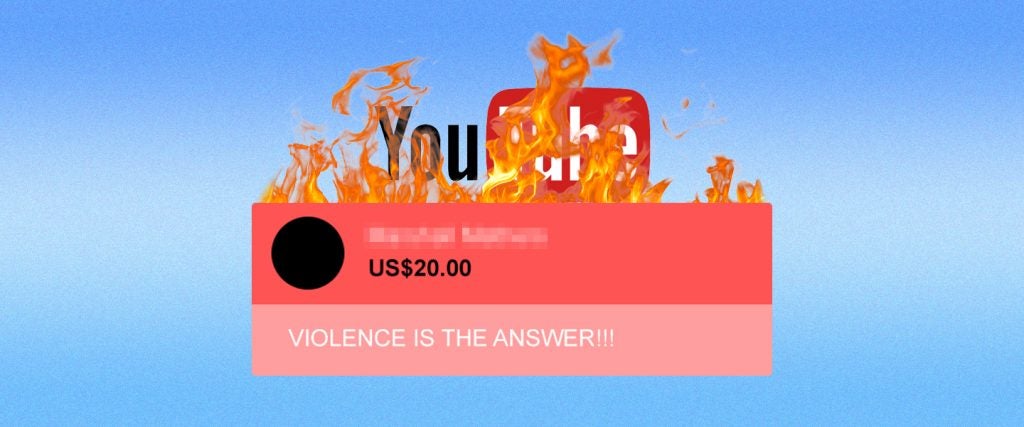

“Super Chat,” as the ISD report explains, is a five-year-old feature that allows creators to monetize engagement from viewers, who can pay to post live chat messages that will be prominently displayed to the rest of the audience for that stream. Depending on how much you’re willing to spend, you can even pin your message to the top of a chat feed.

Conducting case studies of YouTuber and political commentator Tim Pool’s Timcast IRL program, as well as the Right Side Broadcasting Network and The Young Turks, analyst Ciarán O’Connor found hundreds of instances in which cash was traded for the opportunity to post remarks that violate YouTube policy: misinformation about the 2020 presidential election and COVID-19, incitements to violence during the January 6th Capitol riot and hate speech directed at the Black Lives Matter movement. YouTube, which takes a 30 percent cut of those Super Chat sales, netted thousands of dollars from such content. Meanwhile, many broadcasters avail themselves of the option to turn live chat replay off, so after the fact, you can’t see what people paid to say during a stream. And there are no tools that allow one to track comments on a larger scale.

O’Connor also points out that while YouTube recommends the assignment of moderators for live, “high-traffic events,” and offers some automated filtering services, channel owners commonly forgo those safeguards. Indeed, it appears to be in the mutual interest of these popular hosts and the Google-owned social network to let people spout off in the chat. While banning accounts that propagate the same conspiracy theories and extremist ideology in videos, YouTube can quietly profit from them via this sideline, which is part of a growing segment of non-advertising revenue. As trolls discover that $5 can get them the spotlight for a prohibited statement that won’t be removed, the practice may spread.

Without an incentive or pressure to stanch this flow of toxic but lucrative material, it’s unclear whether YouTube will do so. We’ve already seen how the site’s algorithm can radicalize users by funneling them from “intellectual dark web” videos to more violent and hateful stuff. In fact, we’ve yet to see any tech company really get a handle on the dangerous extremism that flourishes throughout the web. Sometimes, as with far-right “free speech” apps, that’s by design.

But for the likes of Facebook, Twitter and YouTube, it often looks to be plain old indifference.