Alireza Dehghan learned how to code when he was only 11, but not long after, he began to struggle with depression. As a young adult, Dehghan was finally diagnosed with post-traumatic stress disorder and needed help. The problem was that the same pain that contributed to his psychological issues also made it impossible for him to trust anyone.

“I couldn’t tell the psychologist the whole truth because at the end of the day he was a human, and humans talk to each other. I didn’t feel completely private and secure to talk,” Dehghan tells me. “This is the case with a lot of people — some people can’t talk because what they want to say might be embarrassing, therefore a diagnosis will become difficult or inaccurate.”

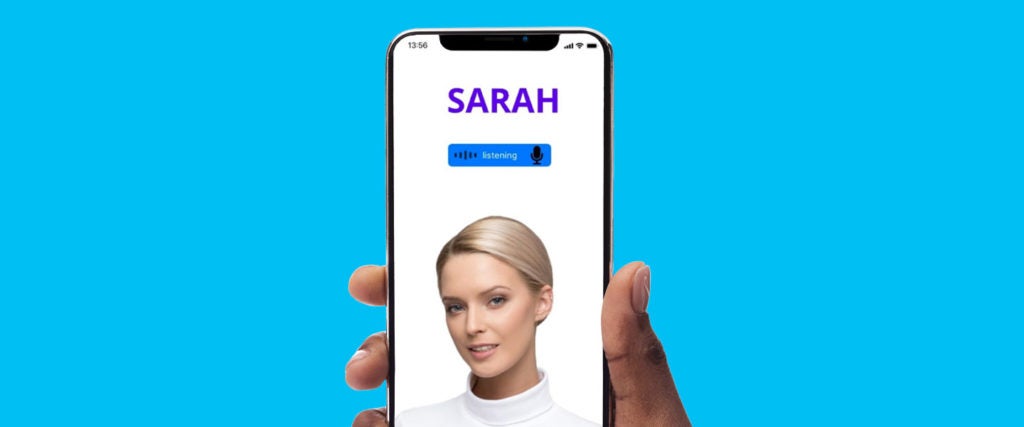

Finding a therapist and waiting weeks for an appointment are hard enough when you're in crisis, so Dehghan turned to technology for help instead. Last April, a month into the pandemic, he launched SARAH, which he bills as the world's first artificially intelligent therapist.

Unlike Woebot and other mental health chatbots that market themselves as therapeutic tools meant to be used alongside traditional counseling, SARAH is aimed at people who no longer trust the therapeutic process. To conduct therapy, SARAH relies on deep learning, which uses artificial neural networks loosely modeled on the workings of the brain. When you talk about an argument with a friend or a problem at work, SARAH draws on a large knowledge base written by 10 trained psychologists on staff to guide you through one of many decision trees, until you eventually arrive at a solution or diagnosis.

Deep learning lets SARAH learn more about a person the more they talk, much as a human therapist would, which in theory should improve the experience. To Dehghan, this is SARAH's most exciting feature, along with "emotion detection and intent recognition," he says. "These are the elements that are important in a human therapist, to remember previous conversations and get personalized with you, to detect emotions and react to them, and to recognize the intent of a conversation."

As for the name, Dehghan says he landed on SARAH because it's universal. "It's used from China to the Middle East to the U.S." In other words, everyone knows a Sarah, and the familiar name, along with SARAH's speech generation and animation features, might make her feel a little less like a robot. That said, SARAH is still like a robot in the best possible ways: She's compliant with the EU's General Data Protection Regulation and doesn't record any conversations. Dehghan is also firmly against selling ads or user data for any reason, and insists anything you tell SARAH stays between you and her.

“One of the foundations of SARAH the therapist for me personally was privacy,” Dehghan says. “The fact that I can confide in Sarah and tell her everything that is real, because she isn’t a human, she won’t make fun of me, judge me or gossip about me.”

SARAH isn't yet available to consumers. After running a crowdfunding campaign over the summer, Dehghan is now seeking more seed investors to bring SARAH to its final form. Still, he insists that "our system's architectural design is finished."

Unsurprisingly, practicing therapists have concerns about AI therapy, though they're less worried about their job security than about people's safety when they're really struggling, and about whether it works at all. For many clinicians and clients alike, the secret ingredient that makes therapy work is human connection. "There is a human element of therapy that's unquantifiable but crucial to the process of healing," explains Ben Fineman, a psychotherapist and host of the podcast Very Bad Therapy. "Human beings coregulate one another; that's as relevant to successful therapy as anything else."

Randy Withers, therapist and founder of the blog Blunt Therapy (no, not those kinds of blunts), agrees. “While a machine can be programmed to quote Freud, perform psychoeducation and even to adhere to ethical standards such as confidentiality, it will never be able to be present, empathize or self-disclose in ways that are therapeutically appropriate,” Withers says, adding that good therapy is 80 percent relationship and 20 percent skill. “In order for a relationship to exist, two living, breathing beings have to be present.”

Dehghan has no plans for SARAH to replace therapists, though. Rather, it's designed to help people who don't have access to therapy and those who trust technology more than humanity. "SARAH the therapist is committed to making professional therapy affordable, available and accessible," Dehghan says, insisting that having more options is a positive in and of itself. "People still ride horses after the invention of the car. It just depends on how they feel toward it."