It took ArcturusXXXIII two clicks to end up on a Tumblr page filled with apparent child porn. They say they’d simply gotten a follow, opened the page and spotted a repost of a questionable image. When they opened the page it came from, they saw graphic images with hundreds, even thousands of likes.

Arcturus reported the content and got an automated response. Then they tried to contact abuse@tumblr.com, but that email was no longer in use. They waited four days, and nothing happened — the images were still there when they checked. Fed up, Arcturus wrote an open letter about the “hotbed of child pornography” on Tumblr, tweeting it at Tumblr founder David Karp, CEO Jeff D’Onofrio and parent companies Verizon and Oath. “Ignoring the problem, as you have done for so many years, is clearly not working,” they wrote.

Three days later, shit hit the fan. First, Tumblr vanished from the App Store, reportedly over its child porn problem. Then came the announcement that rattled the internet: Tumblr would place a blanket ban on all adult content.

Now, the day of reckoning has come and gone — December 17th, the day Tumblr said it would be purging the site for good, putting an end to what was, for many, the last safe space to be horny online. Countless people detailed how the site provided a unique platform for sex workers, kinky folk and LGBTQ people to connect on all things explicit. (Coincidentally, the shutdown took place on the International Day to End Violence Against Sex Workers.) On the shutdown day, users staged a “#logoffprotest,” pledging to boycott Tumblr for 24 hours to show their displeasure with how the ban harms NSFW creators. They cited an ineffective flagging system that deems innocuous content explicit and, largely, leaves Nazis alone.

But 14 longtime Tumblr users tell MEL the site had far bigger problems than “female-presenting nipples”: exploitative, abusive and illegal content, which they spent years reporting and flagging, to no avail. The dark side of Tumblr was always two or three links away: a hotbed of seemingly illegal content, from minors posting nudes to overt bestiality and photos of children reblogged into fetish hubs. Users say the site continually failed to respond adequately to reports, and that it had plenty of time — and advance warning — to correct the problem before selecting the nuclear option. And in doing so, users say, Tumblr failed the communities that needed it most.

this is not news, users have been yelling at tumblr for years to get on shit like child porn, white supremacists and pedophile grooming blogs and tumblr's completely refused to do so bc effort, now they're burning it all down bc they're still too lazy to investigate reports

— (@RaygunCourtesan) December 4, 2018

tumblr: “sorry we can’t deactivate these specific child porn and nazi blogs you’ve brought to our attention several times it’s too complicated ://”

also tumblr: “we’re gonna delete every single tiddy on the entire site by this date”

— a jar of spiders in his car (@devinlucifer) December 3, 2018

‘I Would Straight-Up Tell People I Was 16 Years Old’

Comic writer Leah Williams writes in a Twitter thread that she “distinctly remember[s] trying to report the way predators were using Tumblr to staff years ago” but “Tumblr wouldn’t do anything about it.” She made a Tumblr page in 2014 gathering predatory screenshots, mostly of a user who would reblog photos of young children or teenagers onto his BDSM porn blog, often adding suggestive or sexualized comments without the consent of the original poster.

Also in 2014, a Tumblr user named Em posted nudes on her blog. She was 16 at the time, and said so — but not only did her photos stay up, they were reblogged wildly. “I would straight-up tell people I was 16 years old and people literally didn’t care,” she admits to MEL. “I never got in trouble, I never had my blog shut down.”

Porn blogs sexualizing children and minors posting nudes are just a sampling of the illegal content users tell us they found on Tumblr. Users also identified revenge porn, bestiality (an issue that also plagued YouTube and wasn’t adequately handled until after a BuzzFeed investigation in April), porn bots and blogs self-identifying as MAPs (minor-attracted persons) or pedophiles who say they won’t contact minors but still make text posts sexualizing children.

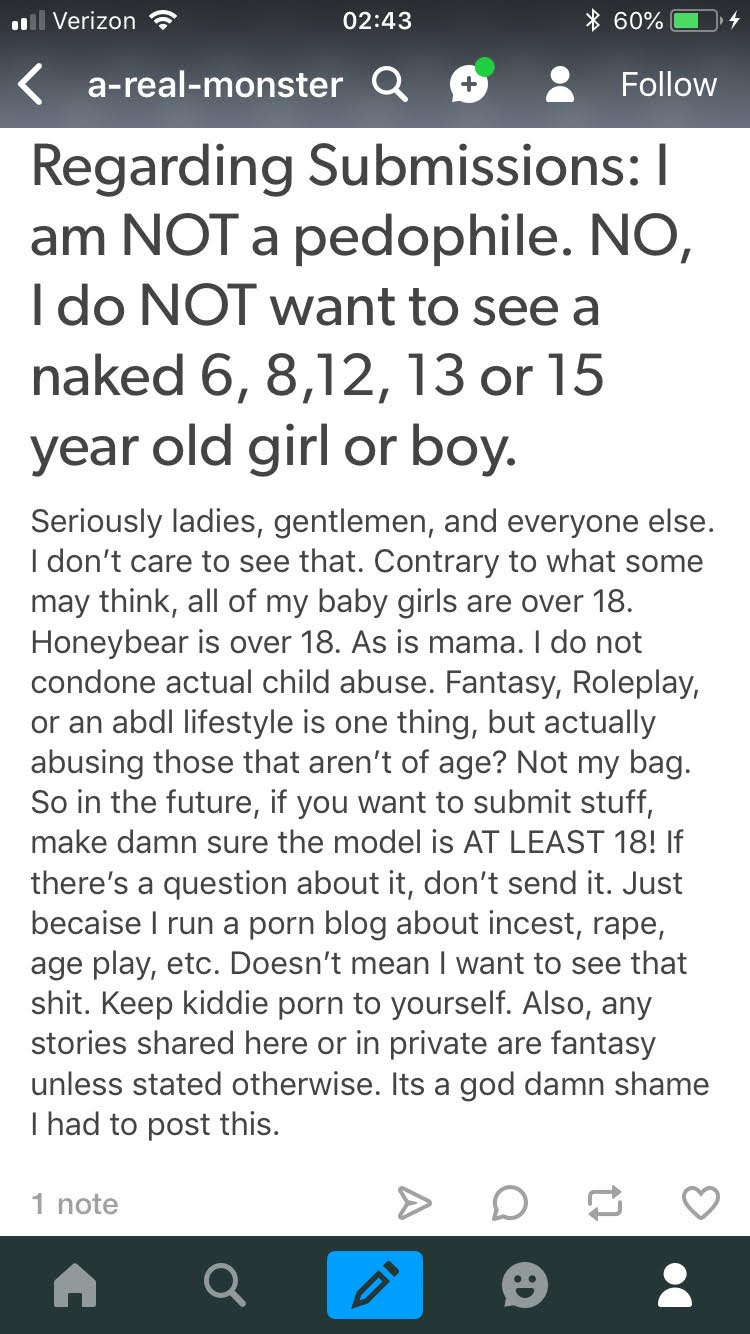

Some users tell MEL they were able to get questionable content removed, but others articulate frustrations with Tumblr’s reporting process. Tumblr user a-real-monster, who ran a kinky porn blog they deactivated of their own accord last week, says they’d often encounter “questionable” user submissions to their blog and would have to report them “daily, until it eventually stopped.”

One anonymous Tumblr user, who spent two years on the site, says he “encountered illegal content on the regular,” predominantly involving what he identified as minors trying to sell explicit videos. He would often attempt to report the content, but he says he experienced logistical problems on the mobile app, such as having to log in again and being unable to generate a URL of the post. (A test report indicates this is no longer an issue.)

When he sent reports, he says, “Tumblr would rarely respond.” If someone did, “they’d say they didn’t see anything wrong with it. … Overall, the experience was just a hassle and not at all effective.”

I'm sorry, but I guarantee if I clicked through some of the nasty porn blogs that follow me, I could find child porn on tumblr in less than five minutes easily. This has been a problem for the entire nine years I've used this website, and your useless report feature doesn't help. pic.twitter.com/LAv5g55FbW

— Hannah (@hannahisdumb) December 3, 2018

‘Tumblr Clearly Didn’t Know How to Deal With This’

Another anonymous user who draws explicit art says they blocked tags like “underage” and “pedophile” and still found “explicit pornographic fanart of underage teens” in their feed’s recommendations.

Tumblr user fuckedupdaddy, who runs a porn blog with 3,000 followers centered around BDSM, incest fantasies and age play, says that as he amassed followers, he began noticing a significant number of pedophile blogs following him, to the extent that he posted about it.

“I have a huge age-play kink, but I like those games with adults and consent, not with kids,” he says. He would always report this content, but he “kept running into it,” even seeing the same images repeatedly — likely because when a blog is deleted, reblogs of the images from the deleted account remain active. “Tumblr clearly didn’t know how to deal with this. If they had decent software with image recognition, it should be easy to find and remove the same pictures.”

Tumblr says it uses “a mix of machine-learning classification and human moderation from our team of trained experts” to respond to reports. In a November statement, the company said it works with organizations like the National Center for Missing and Exploited Children to “actively monitor content uploaded to the platform,” and that “every image uploaded to Tumblr is scanned against an industry database of known child sexual abuse material, and images that are detected never reach the platform.” Tumblr did not respond to MEL’s request for comment.

‘They’d Rather Have Ads Beside Nazis Than Ass’

“I’ve reported lots of minors who attempt to enter the sexual community, but I never received confirmation of any sort that something was done,” says Melody Orenda, a 22-year-old sex worker who says she’s seen “way too much” illegal content on Tumblr. “They should have a team of real people working on this, not a terrible photo reading algorithm.” Now the system has gone haywire, she says: All the content on her blog is currently flagged as explicit, even fully clothed photos.

As Leah Williams pointed out in her Twitter thread, major websites often don’t act on problems like this until their profit is threatened. That may have been the case here: A former Tumblr engineer told Vox the porn ban had already been in the works due to difficulties selling ads next to explicit content, but Arcturus’ child porn letter made Verizon act sooner.

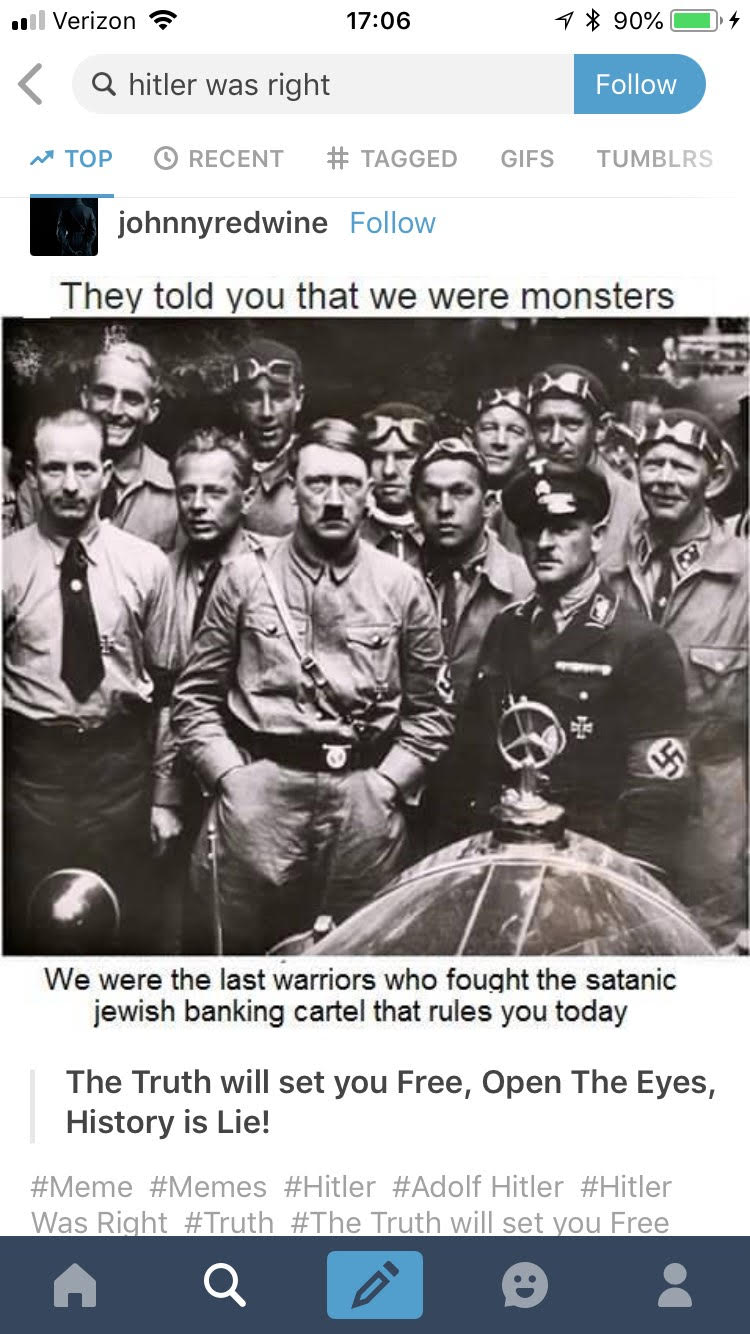

Still, the reporting problem didn’t just extend to illegal porn, users say. Several people tell me they’re frustrated with Tumblr for failing to moderate graphic self-harm content, as well as Nazism and white supremacy — issues that also pervade Twitter. Currently, if you search “boobs” on Tumblr, it returns zero posts. As of Tuesday, December 18th, searching “white pride,” “Hitler was right” and the far-right, anti-immigrant term “defend Europe” still yields hundreds of results.

“It seems as if [Tumblr is] ignoring every issue on their site and simply banning porn,” says Michelle, a sex worker on Tumblr. “I guess they’d rather have ads beside Nazis than ass.”

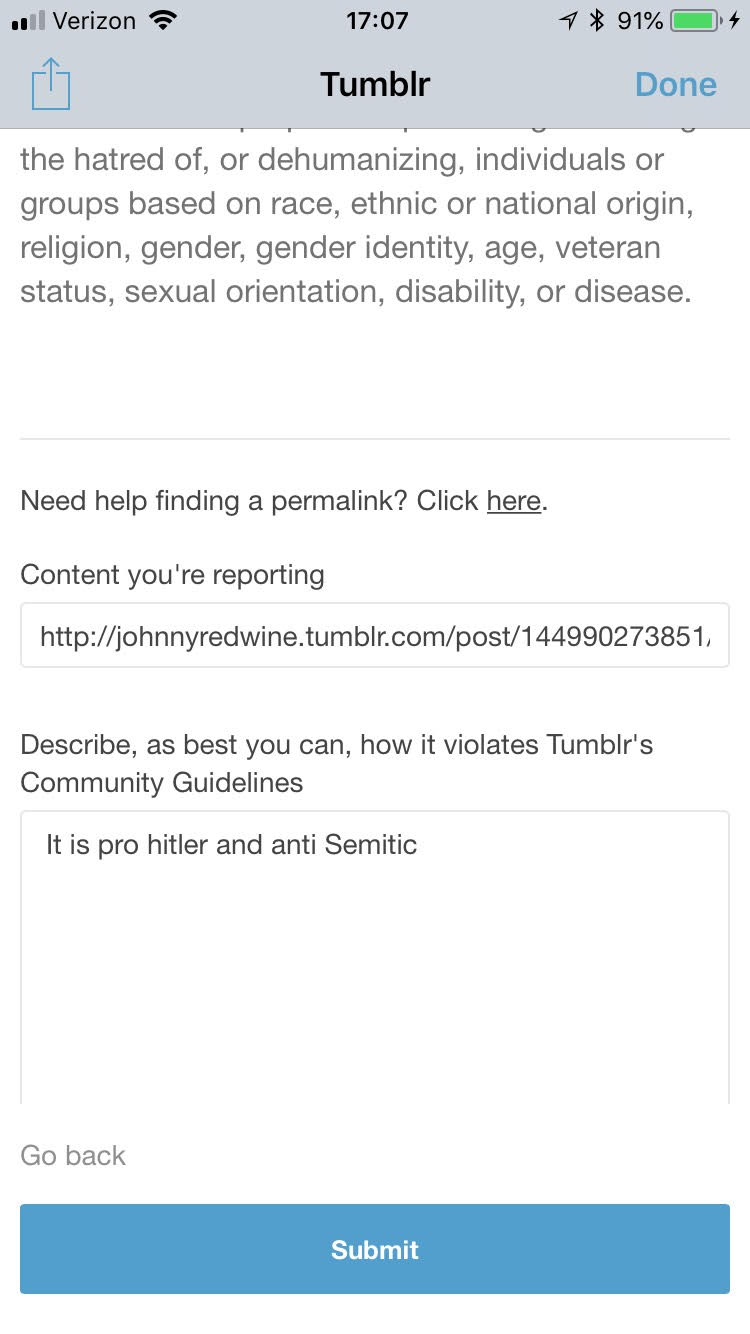

When I try to report one pro-Hitler post on mobile — it clearly violates Tumblr’s rules against hate content — it’s a challenge to find the correct report button. Then I go through nine rounds of image-based CAPTCHAs, only to receive an automated email that reads, “Please don’t ask your friends to report the same issue.”

Tumblr's report button is hidden behind a Share button, so to report illegal images (like child porn) you have to first click Share?

Perhaps the lack of proper reporting was because you were expecting normal users to click 'Share' on child porn? pic.twitter.com/ERI1FLsbkC

— Josh Ling / Tactful (@tactful) December 4, 2018

Why Social Media’s Moderation Problem Never Ends

Kat Lo, a University of California, Irvine, researcher who studies content moderation, says this tendency to filter out adult content while appearing more lenient on hate speech and Nazis is multifaceted. “There is a legacy of established standards for policing sex work, on top of cultural stigma and the historical marginalization of sex workers,” she says, noting that the payment processors, advertisers and app stores working with social media sites tend to be stricter about adult content.

Moderating this is “less risky” and “much less expensive” when there’s a blanket ban on sex, Lo says. But why not the hate speech? Companies “have more precedence and better technology developed for porn,” she adds, while companies cracking down on white supremacy may wind up entangled in a complicated free speech debate.

Banning porn isn’t Tumblr’s first attempt at managing the site’s explicit content. In February 2018, after being sold to Yahoo, Tumblr introduced a “Safe Mode” that filtered out NSFW content. This became the default for all users unless they opted out, and anyone without an account was stuck in permanent Safe Mode. Adult-content creators said it was negatively affecting their work. And there were plenty of problems even then, Dazed reported: Safe Mode was “flagging non-explicit content yet showing highly explicit works in the search results.”

Content moderation on large social media platforms has always been tricky. Human moderators are smarter, but viewing disturbing content all day can take an immense psychological toll. Artificial intelligence lessens the human cost, but it’s far from perfect. The AI Tumblr used to flag adult content was widely lampooned for flagging content like a photograph of Mr. Rogers soaking his feet or drawings featuring flesh-toned colors.

“Many disastrous consequences of these systems have emerged from content moderators unable to engage with context around the posts they must make decisions about,” says Lo. For example, a photo of a child wouldn’t violate the rules. That same photo of a child reblogged onto a porn page with a sexualized comment should violate the rules, but only if context is considered.

Lo says instead of viewing commercial content moderation as “unskilled labor that can be replicated consistently at a massive scale,” it should be seen as “highly skilled work.” She cites moderators of large Reddit communities as a worthy example: They “have been able to create tools that leverage what computation can handle reasonably well,” which “frees up moderator time and energy” to lend a human eye to more challenging cases, without being constantly bombarded with disturbing images.

ArcturusXXXIII says the time for Tumblr to step up and police its platform passed a long time ago. “There is so much illegal content on there that I really think removing all traces of porn was their only viable option.”

Lo says a social media platform that allows a wide scope of adult content while successfully filtering out illegal content would be possible, “but it would require a lot of very expensive, highly skilled moderation work and an absurd amount of upfront resources put into policy-building, infrastructure and tooling to support moderation that can both act quickly and with nuance.” But when websites like Twitter are still relying on unpaid moderation from journalists and users to figure out what to delete, it seems unlikely a large-scale platform would be willing to shell out for this.

Steve, who makes free, consent-focused kinky porn on his Tumblr theruleset, is even less optimistic.

“It’s disheartening to feel like you’re part of some Gordian knot people won’t be able to solve,” he says.