April 1 might be officially known as April Fools’ Day, but anyone who has ever known the shame of falling for an elaborate prank knows it’s more like Constant Vigilance Day. It’s a holiday (“holiday”) that reminds us to question the reliability of our closest friends and family, favorite publications and trusted brands, lest we fall victim to one of their pranks.

But in the digital era, when so much information is thrown at us every day, it seems odd that we should save only one day each year for keeping our bullshit detectors on high alert. Bad information dresses up as legitimate 365 days of the year, and even the most trusted monoliths of factual truth, from newspapers to scientific journals, can get it wrong.

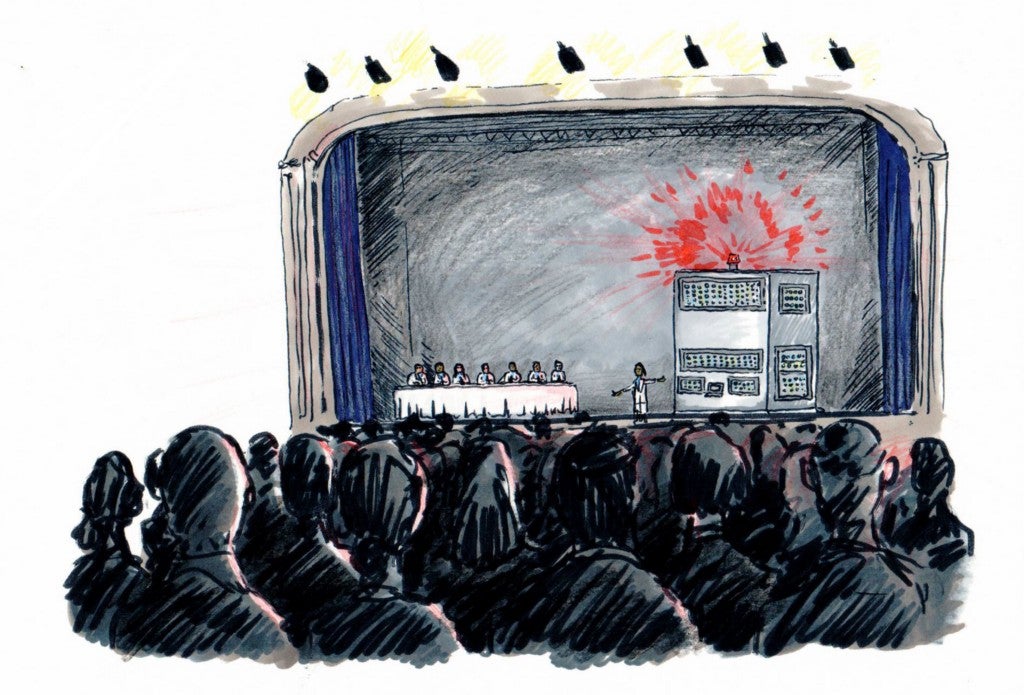

Consider the paper “Chocolate With High Cocoa Content as a Weight-Loss Accelerator” published in the serious-sounding journal International Archives of Medicine. The too-good-to-be-true research went viral and was publicized by media outlets around the world, until the author ultimately admitted it was a hoax designed to draw attention to the wide proliferation of fake science.

But even when researchers’ intentions are honest, science “can yield false results,” according to Pacific Standard’s Michael Schulson. “The ways that researchers frame questions, process data, and choose which findings to publish can all favor results that are statistical aberrations — not reflections of physical reality.” Dwindling public funding to replicate previous studies, a lack of scrutiny in the science press and the pressure scientists feel to draw flashy conclusions from their work only make the problem worse. So how are we supposed to separate “undeniable fact” from “let’s get a second opinion” and “LOL, yeah right”?

Here are a handful of red flags to watch out for to keep you from getting April-fooled by “science” — even when it’s not April.

The first study’s not the deepest

First rule: trust no one study. That’s not to say a single study can’t suggest or discover something new, or even challenge a widely believed notion about the universe as we know it (like a study that challenged the idea that facial expressions are universal). But one paper doesn’t make for good science. The key to establishing a scientific fact (or as close to “facts” as humans can get, anyway) is replicability: the more independent researchers who can get the same results using the same methods, the more likely the discovery is rock-solid.

Be skeptical of headline-making, astounding conclusions

To be safe, you can probably just throw any diet study out the window the minute you come across it. Most of these (chocolate helps you lose weight, alcohol makes you live longer, etc.) probably aren’t founded on much scientific rigor: their conclusions claim to apply to everyone, and they spread because we want to believe that doctors and scientists have always been wrong about the stuff that’s bad for us. Another version of this red flag is findings that seem to reinforce society’s preconceived notions about normalcy. Think scientific racism, or BMI as a measure of a person’s health.

Check the sample size

“Here’s a dirty little science secret,” wrote John Bohannon, the science writer behind the chocolate-helps-weight-loss hoax. “If you measure a large number of things about a small number of people, you are almost guaranteed to get a ‘statistically significant’ result.” In other words, when your sample is small, you can’t be confident that it accurately represents the larger population. It’s even worse when, as in Bohannon’s study, the participants vary dramatically in age, gender, race, religion, nationality or any other background factor, and worse still when you’re taking many different measurements from them. When you seek answers from a small, nonspecific group, the “data” is open to pretty much any interpretation you want to see.
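
To see why the “large number of things” half of Bohannon’s secret works, here’s a back-of-the-envelope sketch in Python. It rests on a few simplifying assumptions: the conventional significance cutoff of 0.05, none of the things being measured having any real effect, and the measurements being independent of one another (measurements taken from the same people usually aren’t, so treat the numbers as a rough illustration). Under those assumptions, each measurement has a 5 percent chance of looking “significant” by pure luck, so the chance that at least one of them does is one minus 0.95 raised to the number of measurements. The function name chance_of_fluke is just a made-up label for this example.

```python
def chance_of_fluke(num_measurements: int, alpha: float = 0.05) -> float:
    """Chance that at least one of `num_measurements` independent tests
    looks 'statistically significant' at the `alpha` cutoff even though
    nothing real is going on. Illustrative model: assumes independence."""
    # Each test avoids a false alarm with probability (1 - alpha), so all
    # of them avoid it with probability (1 - alpha) ** num_measurements.
    return 1 - (1 - alpha) ** num_measurements


for k in (2, 5, 18, 50):
    print(f"{k} measurements: {chance_of_fluke(k):.0%} chance of a fluke 'finding'")
```

Run as written, it prints roughly 10, 23, 60 and 92 percent. Measure 18 things and the odds of a spurious “discovery” are already better than a coin flip, before anyone has done anything dishonest.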

Note the methodology

Pay attention to how a study gathered its data, especially if it relies on participants reporting on themselves. The problem should be obvious to anyone who’s ever purposefully underreported their alcohol consumption to their doctor: everybody lies. And even if every person in a study’s test group tells the truth, each one brings different life experiences and preconceived notions to how they interpret and report what happened to them, which makes inconsistencies a given. Added up, a lot of tiny inaccuracies make for one big blind spot (and extremely shaky conclusions), even if all you’re measuring is a population’s voting preferences.

Consider the source

Thanks to the internet, people and ideas no longer have to rely on the whims of judgmental gatekeepers to gain traction with an audience. This is great for amateur musicians and self-publishing authors, but when it comes to science, the shift can have pretty catastrophic effects. First, it allowed for the rise of open-access journals. Although these publications nobly make scientific research available to readers for free, many of them lack the scrutiny of established academic publications, including rigorous peer review. (Case in point: the same guy who did that chocolate study also managed to get B.S. studies accepted by over half the open-access journals he submitted to.)

Second, public money for academic and scientific research has become so hard to come by that more and more studies are funded by private individuals and companies with their own agendas, which means today’s scientific research comes with more political baggage than ever. (This staggering 2014 New York Times piece outlining the crisis is absolutely worth a read.) Just to be safe, treat your science news as you would a celebrity death rumor or a seemingly satirical headline: save face by doing a few Google searches before sharing that article.

Devon Maloney is an L.A.-based writer. She previously wrote about whether or not millennials will ever get to retire.